NVIDIA's Billion-Dollar Bet on Owning the AI Factory

Key insights

- NVIDIA is investing in a company that competes with it. Marvell makes custom AI chips that rival NVIDIA's own GPUs. By putting $2 billion in, NVIDIA flips the dynamic: the rival becomes part of the ecosystem, locked in financially and strategically.

- More than $11 billion in six months across optics, chip design, cloud, and telecom reveals a deliberate supply-chain strategy. Jensen Huang is not just selling chips. He is buying control over every layer that the AI buildout depends on.

- Jensen said openly for the first time that enterprise software margins may come down. Oracle and SAP run at 70-90% margins because software needs no factories or power plants. AI changes that math by making hardware and energy unavoidable.

This is an AI-generated summary. The source video may include demos, visuals and additional context.

In Brief

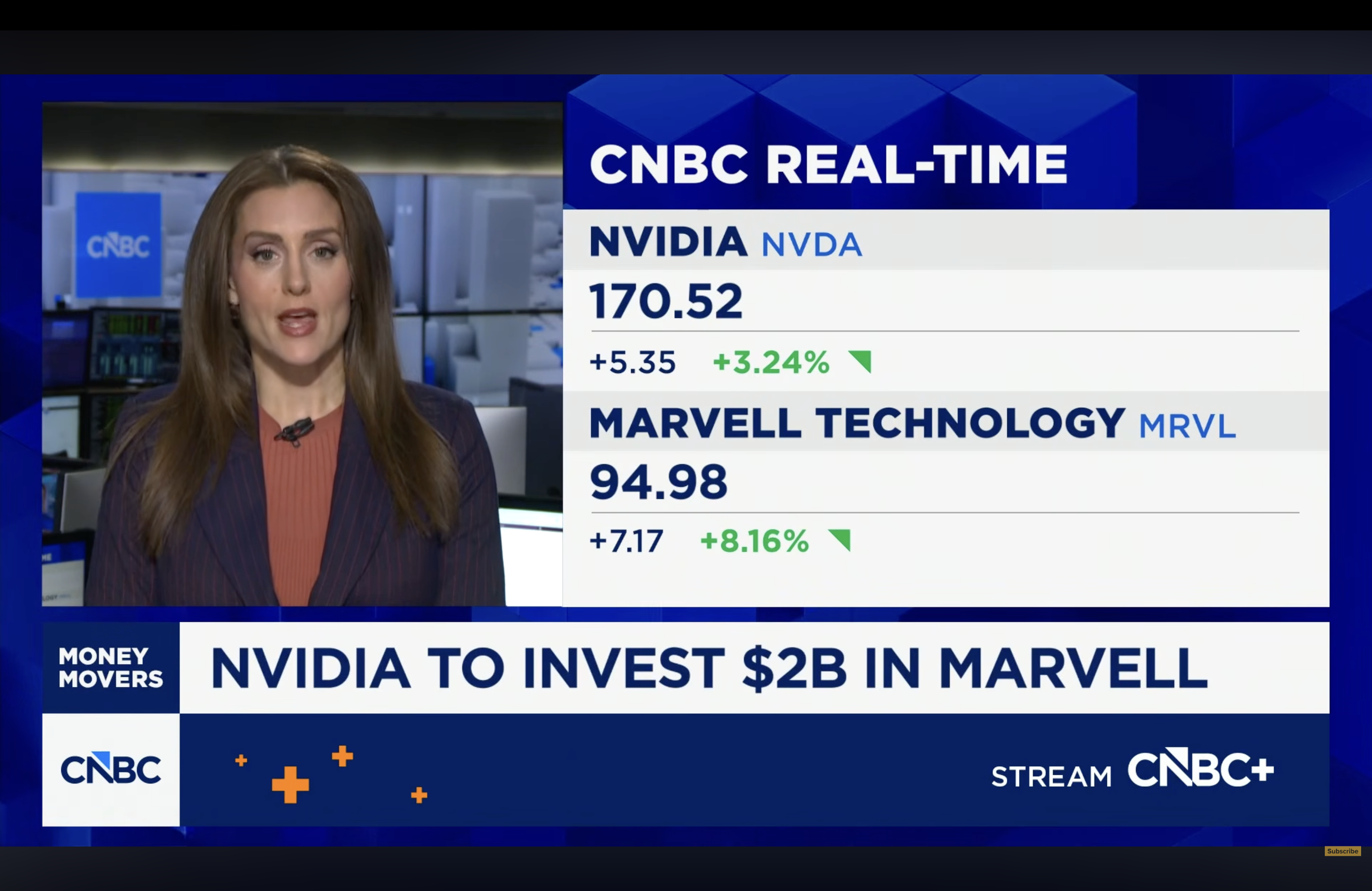

NVIDIA has invested $2 billion in Marvell Technology, a chip company that designs custom AI chips for clients like Amazon. The news sent Marvell's stock up more than 8%. But this is not an isolated deal. It is the latest in a series of billion-dollar investments NVIDIA has made over the past six months, totaling more than $11 billion across optics, cloud, chip design, and telecom. CNBC's Kristina Partsinevelos reports that Jensen Huang, CEO of NVIDIA, has been telling investors at every opportunity that NVIDIA is no longer just a chip company. It is an AI infrastructure firm trying to own the entire AI factory, end to end.

The Marvell deal and the paradox inside it

Marvell Technology does something unusual: it designs custom AI chips (called custom silicon) for large tech companies like Amazon. These chips are built specifically for one customer's needs, unlike NVIDIA's standard GPUs (Graphics Processing Units, the powerful chips that do most of the work in AI systems). Custom chips often compete directly with NVIDIA's GPUs, which makes this deal look strange at first.

So why would NVIDIA invest $2 billion in a company that competes with it? The key is NVLink Fusion, NVIDIA's platform (a set of technologies that lets different components work together), which allows chips from other manufacturers to plug into NVIDIA's infrastructure. Instead of losing business when a cloud provider builds its own custom chip, NVIDIA can now say: no matter what chip you use, your system still runs on our network and our software. As Jensen Huang told CNBC exclusively: "Together, we'll be able to address a much, much larger TAM."

TAM stands for Total Addressable Market, meaning the total pool of potential customers and revenue a company could reach. By welcoming competing chips into its ecosystem, NVIDIA grows its market instead of defending a shrinking share.

Six months, $11 billion, one strategy

The Marvell deal is the latest, but far from the first. CNBC's reporting lays out the full investment wave from September 2025 to March 2026:

- OpenAI: up to $100 billion (September 2025)

- Intel: $5 billion stake (September 2025)

- Nokia: $1 billion (October 2025)

- Synopsys: $2 billion (December 2025)

- Nebius: $2 billion (March 2026)

- Lumentum and Coherent: $2 billion each (March 2026)

- Marvell Technology: $2 billion (March 2026)

The list covers every layer of the AI supply chain. Lumentum and Coherent make optical networking components, which move data between chips in a data center as light over optical fiber rather than as electrical signals over copper cables. Synopsys makes the software that chip designers use to create new chips. Nebius is an AI cloud company. Nokia supplies the broader telecom network. Intel and Marvell make competing chips.

Put together, this is not a shopping spree. It is a deliberate strategy to lock in the suppliers that build the key parts of the AI buildout. Every company that takes NVIDIA's money becomes financially and strategically tied to NVIDIA's ecosystem.
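As a quick check on the headline figure, the amounts in the list above can be tallied. This is a minimal sketch, not CNBC's calculation: the source does not say which deals are counted in the "more than $11 billion" total, so the decision to set OpenAI aside (its figure is an "up to" ceiling) and the variant that also excludes Intel are assumptions made here for illustration. The names `deals_bn` and `total_bn` are hypothetical.

```python
# Deal sizes in billions of USD, copied from the list above.
# OpenAI is listed as "up to $100 billion", so it is kept apart as a ceiling
# rather than added to the committed amounts (an assumption of this sketch).
deals_bn = {
    "Intel": 5,
    "Nokia": 1,
    "Synopsys": 2,
    "Nebius": 2,
    "Lumentum": 2,
    "Coherent": 2,
    "Marvell Technology": 2,
}
openai_ceiling_bn = 100


def total_bn(deals, exclude=()):
    """Sum deal sizes, skipping any company named in `exclude`."""
    return sum(amount for name, amount in deals.items() if name not in exclude)


total_without_openai = total_bn(deals_bn)
# Assumption: one possible reading of the headline number leaves Intel out too.
total_without_intel = total_bn(deals_bn, exclude=("Intel",))

print(f"Listed deals excluding OpenAI: ${total_without_openai}B")
print(f"Also excluding Intel: ${total_without_intel}B")
```

Under either reading, the listed deals are consistent with the "more than $11 billion" description.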

What Jensen Huang actually means by "AI factory"

Jensen Huang uses a specific phrase at almost every public event: NVIDIA is "not a chip company, but rather an AI infrastructure firm" trying to own the entire AI factory.

The word "factory" is deliberate. A factory does not just produce one component. It controls the full process, from raw materials to finished product. For NVIDIA, the AI factory includes the chips, yes, but also the optical networking between chips, the interconnects (the cables and interfaces that link different components), the custom silicon that runs alongside GPUs, and the software that ties it all together.

The investment strategy makes NVIDIA the unavoidable center of that factory. It does not matter whose chips end up on the racks inside an AI data center. If NVIDIA has invested in the company that made those chips, the optical components connecting them, and the design tools used to build them, NVIDIA's ecosystem surrounds the entire thing.

The warning about software margins

The most surprising comment in the segment came from Jensen's exclusive interview with CNBC. Kristina Partsinevelos flagged it as significant: Jensen suggested that margins for enterprise software companies may come down.

This matters because enterprise software companies have unusually high profit margins. Companies like Oracle and SAP run at margins between 70 and 90%. The reason is that software is asset light: once you write the code, you can sell it to millions of customers without building a factory, running a power plant, or maintaining physical inventory. Revenue keeps coming in through subscriptions and licenses while costs stay relatively flat.

Jensen's argument is that AI breaks this model. Every time a company uses AI for anything going forward, it needs hardware and energy to run it. There is no asset-light AI. Large Language Models (LLMs, the type of AI that powers tools like ChatGPT) require significant compute to operate. That compute costs money and uses electricity.
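The margin argument can be illustrated with a small worked example. This is a sketch under stated assumptions: every dollar figure below is hypothetical and does not come from the source, and margin is taken here to mean (revenue - cost) / revenue. It shows only the direction of the effect Jensen describes, not its size.

```python
def margin(revenue, cost):
    """Margin as a fraction of revenue: (revenue - cost) / revenue."""
    if revenue <= 0:
        raise ValueError("revenue must be positive")
    return (revenue - cost) / revenue


# Hypothetical per-customer annual figures in USD (not from the source).
revenue = 1000.0
baseline_cost = 200.0    # assumed cost of delivering the software today
ai_compute_cost = 150.0  # assumed added hardware and energy cost of AI features

before = margin(revenue, baseline_cost)
after = margin(revenue, baseline_cost + ai_compute_cost)

print(f"Margin before AI compute costs: {before:.0%}")
print(f"Margin after AI compute costs:  {after:.0%}")
```

With these made-up inputs the margin falls from 80% to 65%. The point is that a cost that scales with usage, unlike code that is written once, pulls the margin down unless prices rise to match.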

He said this out loud for the first time. Partsinevelos noted it explicitly: this was a big comment, and a new one. For the CEO of the company selling that hardware to say it publicly signals that Jensen sees this as a structural shift, not a temporary bump in costs for software companies.

Glossary

| Term | Definition |
|---|---|
| Custom silicon | AI chips designed specifically for one customer, like Amazon's chips built for its own data centers. Different from standard chips sold to anyone. |
| NVLink Fusion | NVIDIA's platform that lets chips from other companies plug into NVIDIA's infrastructure, like a universal adapter for AI chips. |
| TAM (Total Addressable Market) | The total market a company could potentially reach. A larger TAM means more possible revenue. |
| Optical networking | Technology that uses pulses of light instead of copper cables to move data between chips, which is faster and uses less energy. |
| Interconnects | The cables and interfaces that physically link different chips and components inside a data center. |
| Asset light | A business model that requires little physical infrastructure. Software companies are asset light because they do not need factories or warehouses. |
| LLM (Large Language Model) | A type of AI trained on vast amounts of text that can read, write, and reason. The technology behind ChatGPT and similar tools. |
