Trump Bans Federal Use of Anthropic AI

Key insights

- Trump framed the dispute as a national security threat, calling Anthropic 'left-wing nut jobs' and threatening civil and criminal consequences

- Replacing Claude across Pentagon systems could take six months to over a year, with no alternative vendor currently on the classified cloud

- OpenAI's Sam Altman expressed similar red lines the same day, but Bloomberg reports OpenAI is already involved in Pentagon autonomous drone programs

This is an AI-generated summary. The source video may include demos, visuals and additional context.

In Brief

President Trump ordered every federal agency to immediately stop using Anthropic's AI technology, hours before a Pentagon-imposed deadline expired. The order includes a six-month phase-out period (a transition window for agencies to switch away from a vendor's technology), but Trump threatened civil and criminal consequences if Anthropic does not cooperate. Bloomberg reporter Katrina Manson explains on CBS News why replacing Claude across military systems is far more complex than switching chat apps, and why no alternative vendor is ready to fill the gap.

Background: how we got here

Anthropic, the company behind the AI model Claude, has been working with the Pentagon since 2025. Its models were the first AI tools to reach the Pentagon's classified cloud, a secure computing environment that meets military-grade security requirements. Claude also became integrated into military systems, including the Maven Smart System, a military operating platform that U.S. troops in the Middle East and Indo-Pacific use for intelligence processing and operational planning.

The relationship broke down over contract terms. The Pentagon demanded unrestricted access to Claude for "all lawful uses," including future autonomous weapons programs. Anthropic refused, drawing two specific red lines: no autonomous targeting of enemy combatants (where AI selects and attacks targets without human approval), and no mass surveillance of U.S. citizens. A meeting between Anthropic CEO Dario Amodei and Defense Secretary Pete Hegseth failed to resolve the impasse.

The Pentagon then escalated with threats of a supply-chain-risk designation (a formal label that restricts a company's access to government contracts) and potential use of the Defense Production Act (a U.S. law giving the government emergency powers to compel companies) to force compliance. That set the stage for what happened next.

What happened

The day before the deadline, the Pentagon sent Anthropic a best-and-final offer (a take-it-or-leave-it proposal with no further negotiation) on contract terms. Anthropic refused, stating it could not grant the military unrestricted access to its technology. Hours before the government's 5:00 PM deadline, Trump posted on social media.

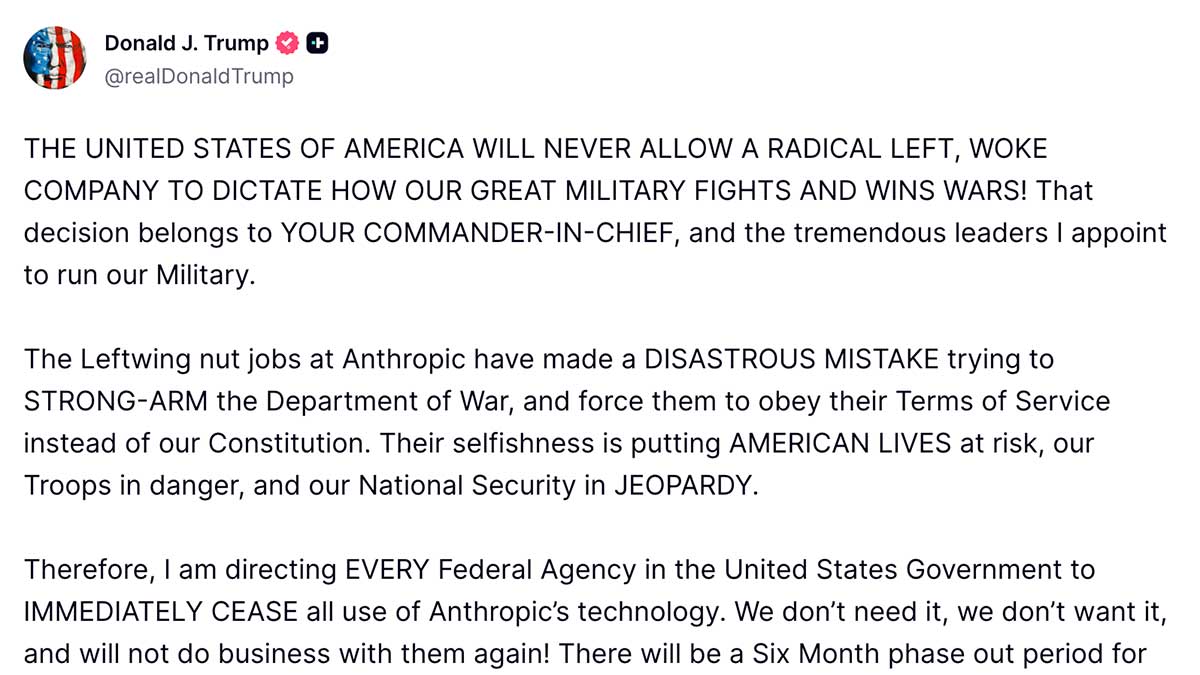

Trump's full statement, posted on Truth Social:

Screenshot: Truth Social / @realDonaldTrump

THE UNITED STATES OF AMERICA WILL NEVER ALLOW A RADICAL LEFT, WOKE COMPANY TO DICTATE HOW OUR GREAT MILITARY FIGHTS AND WINS WARS! That decision belongs to YOUR COMMANDER-IN-CHIEF, and the tremendous leaders I appoint to run our Military.

The Leftwing nut jobs at Anthropic have made a DISASTROUS MISTAKE trying to STRONG-ARM the Department of War, and force them to obey their Terms of Service instead of our Constitution. Their selfishness is putting AMERICAN LIVES at risk, our Troops in danger, and our National Security in JEOPARDY.

Therefore, I am directing EVERY Federal Agency in the United States Government to IMMEDIATELY CEASE all use of Anthropic's technology. We don't need it, we don't want it, and will not do business with them again! There will be a Six Month phase out period for Agencies like the Department of War who are using Anthropic's products, at various levels. Anthropic better get their act together, and be helpful during this phase out period, or I will use the Full Power of the Presidency to make them comply, with major civil and criminal consequences to follow.

WE will decide the fate of our Country — NOT some out-of-control, Radical Left AI company run by people who have no idea what the real World is all about. Thank you for your attention to this matter. MAKE AMERICA GREAT AGAIN!

PRESIDENT DONALD J. TRUMP

The post called Anthropic "Leftwing nut jobs" and accused the company of trying to "strong-arm the Department of War." Trump directed every federal agency to "immediately cease all use of Anthropic's technology" and warned of "major civil and criminal consequences" if the company does not cooperate during the transition (0:30).

Manson notes that Trump preempted his own Secretary of War with this announcement. The Pentagon had been considering two options: cutting Anthropic out or forcing compliance through wartime authorities. Trump chose the first option (2:01).

Why replacing Claude is harder than switching apps

Manson emphasizes a critical operational detail: Anthropic's Claude is already threaded through multiple Pentagon platforms, most notably the Maven Smart System used by U.S. troops in the Middle East and Indo-Pacific (2:43).

Bloomberg has reported the transition could take at least six months. Others estimate over a year (4:29).

The problem is not just removing Claude. It is finding a replacement:

| Vendor | Classified cloud status | Readiness |
|---|---|---|
| Anthropic (Claude) | On classified cloud | Being phased out |
| xAI (Grok) | Just agreed to access | No operational track record on military systems |
| OpenAI | On some classified premises, not the classified cloud | Not fully integrated |

Palantir provides the interface (Maven Smart System), but Claude powers the AI reasoning layer underneath, the component that actually thinks through intelligence data and helps plan operations. Swapping that layer affects how troops actually work, not just which app icon they click.

Manson reports that Pentagon users are fond of Claude specifically because the codebase (the underlying source code) is simpler and easier to work with (5:27). One Pentagon worker told her they prefer Claude, but if it has to be replaced, even with something worse, AI is improving fast enough that they are prepared to accept the trade-off (5:40).

The OpenAI paradox

On the same day, OpenAI's Sam Altman publicly stated that he shares similar red lines about military use of his technology (5:52). The Wall Street Journal had reported that Altman may be trying to broker his own deal with the Pentagon.

Manson describes a tension in that position. Bloomberg reporting shows that OpenAI was named in a successful submission for a $100 million Pentagon prize challenge involving voice-controlled autonomous drone swarming technology (coordinated groups of drones that operate together without individual human control) (7:09). OpenAI told Bloomberg it would only be involved in the voice-control element, not the autonomous part. But Manson says she saw documents indicating OpenAI's role included mission control and orchestration (managing and coordinating drone operations) (7:42).

Manson's assessment: as the Pentagon accelerates its AI and autonomy programs, the line between acceptable and unacceptable involvement is becoming increasingly gray for all vendors (8:00).

The real dispute: autonomous weapons

The underlying conflict is not about the current use of Claude. Anthropic was fine with the Pentagon's existing use of its technology in Maven Smart System (3:06).

The dispute is about the future. The Pentagon wants to move aggressively toward autonomous drones, including lethal autonomous systems. Anthropic does not want its technology to be part of that (3:21).

Manson adds important context: Dario Amodei had been publicly supporting national security use cases. Anthropic's models were the first AI tools to reach the Pentagon's classified cloud. The company had leaned further forward than most competitors (3:45).

Timing and operational risk

The segment notes that U.S. troops in the Middle East are currently using the Maven Smart System with Claude embedded. The U.S. military presence in the region is described as the strongest since the Iraq War, amid ongoing negotiations with Iran (8:13).

Manson does not believe any immediate operational risk exists: the six-month phase-out means nothing changes overnight, and Palantir's platform can access other AI models (8:34). But the timing is described as stark, given that Anthropic is likely supporting active U.S. operations overseas at this moment (9:03).

Glossary

| Term | Definition |
|---|---|
| Maven Smart System | A military operating platform produced by Palantir, used by U.S. troops for intelligence processing and operational planning. Claude is integrated as the AI reasoning layer. |
| Classified cloud | A secure cloud computing environment meeting defense-grade security requirements. Only AI vendors with classified cloud access can be used in sensitive military operations. |
| Autonomous drones | Unmanned aircraft systems capable of operating with limited human intervention. Lethal variants can select and engage targets, making them one of the most contested topics in military AI policy. |
| Defense Production Act | U.S. law granting the federal government emergency powers to compel companies to prioritize national defense needs. Mentioned as a potential tool to force Anthropic's compliance. |
| Phase-out period | A transition window allowing agencies to migrate away from a vendor's technology. Trump's order specifies six months for the Anthropic removal. |
| xAI (Grok) | Elon Musk's AI company. The Pentagon has recently agreed to bring Grok onto the classified cloud, but the system lacks an operational track record in military contexts. |
