Anthropic's Leaked Mythos Model Rattles Cybersecurity

Key insights

- The leak itself is a credibility problem: Anthropic is building an AI model specifically designed for cybersecurity defense, yet exposed ~3,000 unpublished internal assets through a simple CMS misconfiguration.

- Nikesh Arora bought $10 million in Palo Alto Networks stock during the selloff, signaling confidence that AI will expand demand for cybersecurity rather than replace it.

- Anthropic's plan to give defenders early access to Mythos before the general public is the right instinct, but the leaked details may have already reduced whatever head start defenders would have had.

This is an AI-generated summary. The source video may include demos, visuals and additional context.

In Brief

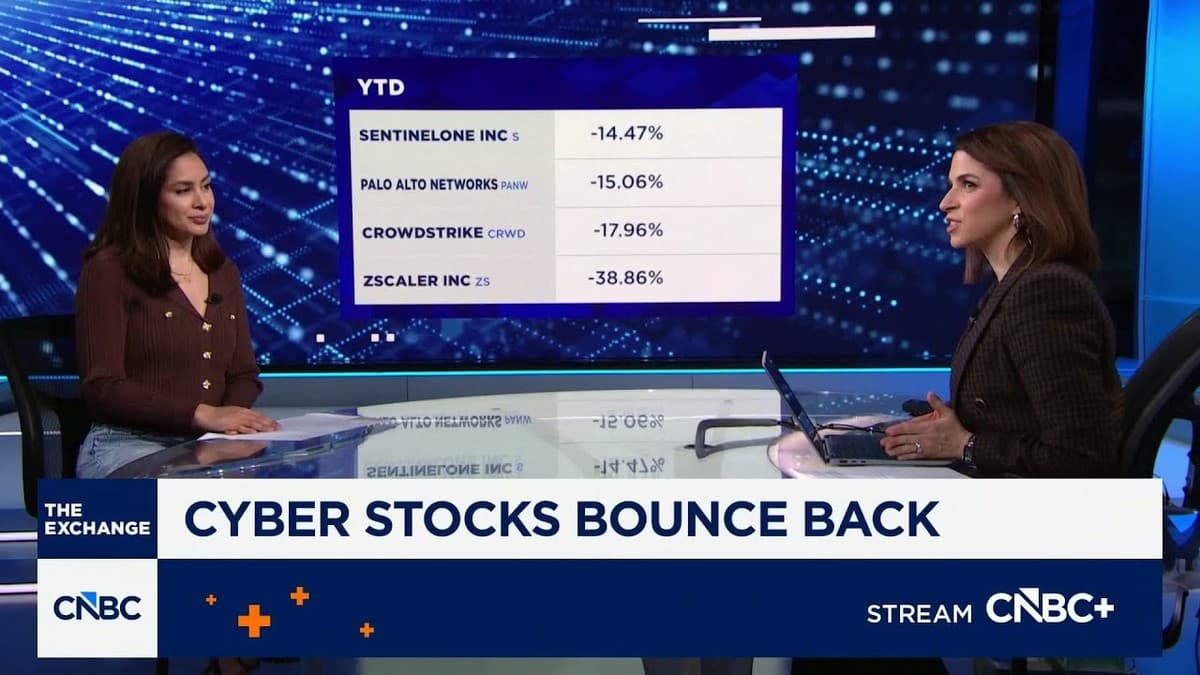

Anthropic is testing a new AI model called Claude Mythos (internal codename: Capybara), rumored to debut in April. The model was supposed to stay secret, but around 3,000 unpublished internal assets were accidentally exposed through an unsecured CMS (Content Management System, the software Anthropic uses to manage its website content). Security researchers Roy Paz from LayerX Security and Alexandre Pauwels from the University of Cambridge discovered the leak. Fortune first reported the story on March 26. The news sent cybersecurity stocks lower. Year-to-date, SentinelOne is down 14.47%, Palo Alto Networks 15.06%, CrowdStrike 17.96%, and Zscaler 38.86%.

What is Mythos?

Anthropic's leaked draft blog post described Mythos as "the most powerful AI model we've ever developed", a significant jump over Claude Opus 4.6 (the current most capable model in the Claude family). According to the leaked materials, Mythos scores dramatically higher on coding, reasoning, and cybersecurity benchmarks.

The term "step change" appeared in Anthropic's own draft materials, meaning this isn't a small incremental update but a major leap in capability.

That description is exactly what alarmed the cybersecurity industry.

Why cybersecurity companies are worried

Palo Alto Networks CEO Nikesh Arora published a blog post on March 30 laying out the concern bluntly. He warned that a single bad actor, one person working alone, will now be able to run cyberattack campaigns that once required entire teams of skilled hackers. Arora wrote that every desktop computer will effectively behave like a server, likely running unsupervised AI tools close to sensitive systems. The attack surface, meaning all the possible entry points a hacker can exploit, keeps growing mostly unnoticed.

In the same post, Arora called on Anthropic and OpenAI to release advanced capabilities responsibly, and specifically to make sure defenders (security teams protecting systems) have been consulted before any public launch.

Arora also put his money where his mouth is: he bought $10 million in Palo Alto Networks stock last Friday, during the very market selloff his blog post was describing.

The big question for investors

CNBC's Seema Mody put the core dilemma clearly: will powerful large language models replace cybersecurity companies, or will they actually increase demand for them?

There are two ways to read the situation.

Bear case: If an AI model can do the work of a full security team, companies might buy less security software. Why pay for a team of analysts when an AI does it cheaper?

Bull case: A more dangerous threat landscape means every company needs more protection, not less. The total addressable market (TAM, the total pool of money companies spend on security) grows as the threat grows. Arora's $10M stock purchase signals he believes in the bull case.

Anthropic has said it plans a defender-first rollout: giving security defenders early access to Mythos before releasing it to the general public. The idea is to give the good guys a head start.

The irony of the leak

There is a hard-to-miss irony here. A company building what it describes as a breakthrough AI for cybersecurity defense accidentally exposed thousands of internal assets through a basic misconfiguration of its own website software. If securing a CMS is this difficult, securing AI-powered systems at scale will be much harder.

The leak does not change what Mythos can do. But it does raise a legitimate question about whether the planned defender-first advantage has already been partially lost. The details are now public. Anyone paying attention knows what is coming.

Glossary

| Term | Definition |
|---|---|
| CMS (Content Management System) | Software used to create and manage website content, like a filing cabinet for web pages. Anthropic's internal CMS was misconfigured, making draft materials publicly visible. |
| Attack surface | Every possible way a hacker could try to break into a system: open ports, software bugs, weak passwords, misconfigured tools. |
| Large Language Model (LLM) | An AI system trained on vast amounts of text that can generate human-like responses, code, and analysis. Claude, GPT-4, and Gemini are examples. |
| Total addressable market (TAM) | The total amount of money companies spend on a product or service globally. A bigger TAM means more revenue potential. |
| Defender-first rollout | A release strategy where security defenders (companies protecting systems) get early access to new tools before the general public. |

Sources and resources

- CNBC Television — New Anthropic model rumored to bring disruption to cybersecurity sector (YouTube)

- Fortune — Anthropic says it's testing Mythos after data leak

- Fortune — Anthropic's leaked AI model and cybersecurity risk

- CNBC — Cybersecurity stocks react to Anthropic AI Mythos

- CNBC — Nikesh Arora buys $10M in Palo Alto Networks shares

- Axios — Claude Mythos and cyberattack concerns

- Euronews — What is Anthropic's Mythos?

- Palo Alto Networks

- Anthropic
