How Anthropic's PMs Use Claude to Skip the Queue

Key insights

- When PMs can query databases directly, the data science team stops being a bottleneck and becomes a strategic partner. That's an org-structure shift, not just a productivity gain.

- PMs building their own test suites means product quality becomes proactive rather than reactive. The gap between 'I think this works' and 'I measured that this works' gets smaller.

- METR data shows AI went from handling 21-minute tasks to 12-hour tasks in just 16 months: a 41x jump. Traditional roadmaps assume stable technology, but the ground is rising fast.

- Lisa's framing is the key one: this isn't about doing the same things faster. It's about doing things she couldn't do independently before. That's a qualitative shift, not a quantitative one.

This is an AI-generated summary. The source video may include demos, visuals and additional context.

In Brief

Lisa Crofoot, a Product Manager at Anthropic, demos two everyday workflows that show how the company's own PMs use Claude. First: querying a product database in plain English and getting a polished chart in seconds, no SQL knowledge needed. Second: expanding a handful of test cases into 50 in minutes. Both examples come from a short video in Anthropic's "How Anthropic uses Claude" series, paired with a companion blog post by Cat Wu, Head of Product for Claude Code.

Getting data used to mean waiting in line

Lisa is direct about the problem: "Getting data as a product manager is like a pain point." Normally, a PM who wants to understand something about their product (say, how many users prefer dark mode) has two options: ping a data science colleague and wait for their availability, or attempt to write SQL (a query language used to pull information from databases) against a database they barely know. Neither option is fast, and neither lets the PM work independently.

Anthropic's data science team solved this by setting up a BigQuery MCP server. BigQuery is Google's cloud database service for large datasets, like a gigantic spreadsheet stored in the cloud. MCP (Model Context Protocol) is a standard that lets AI tools connect directly to external data sources, like a universal adapter. Together, they gave Claude Code a live connection to Anthropic's product data tables.

Now Lisa can just ask in plain English.

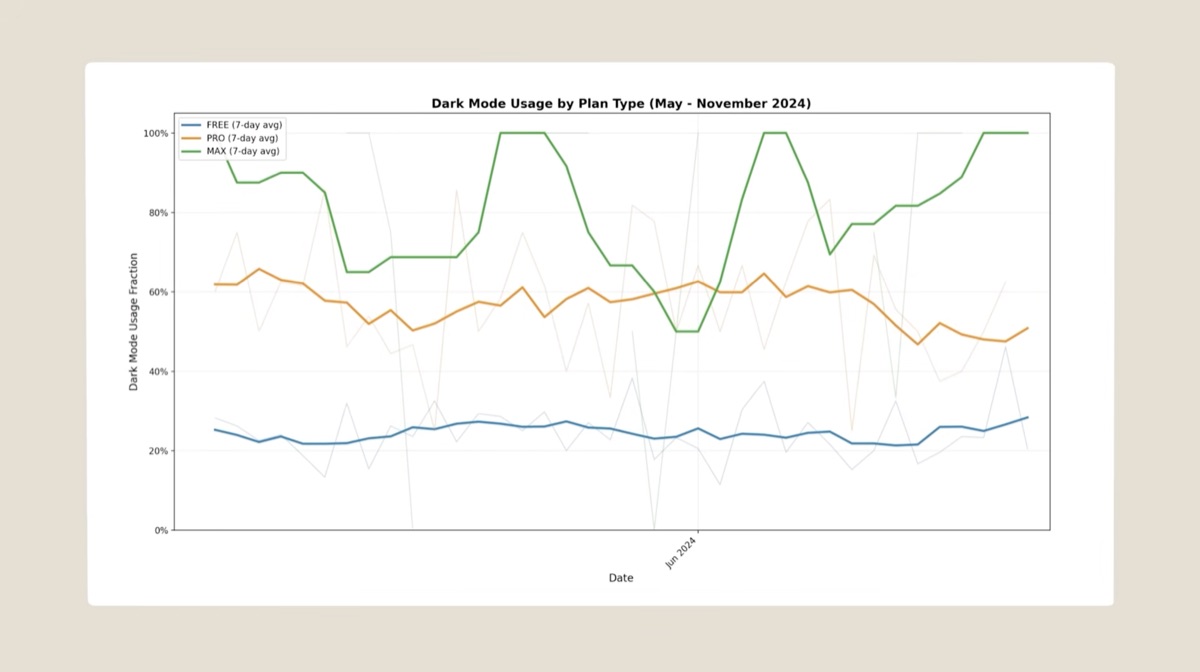

The demo: dark mode usage in seconds

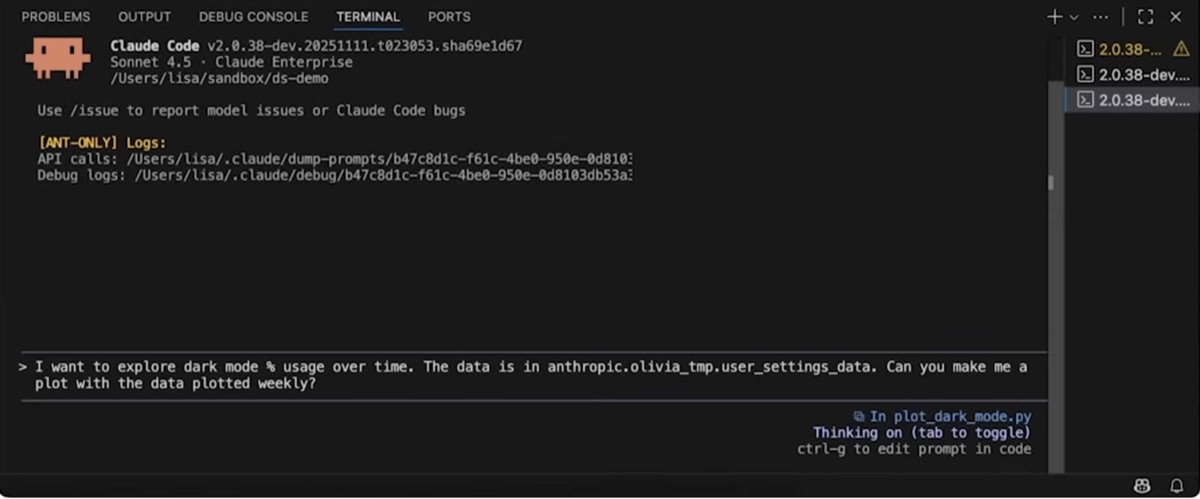

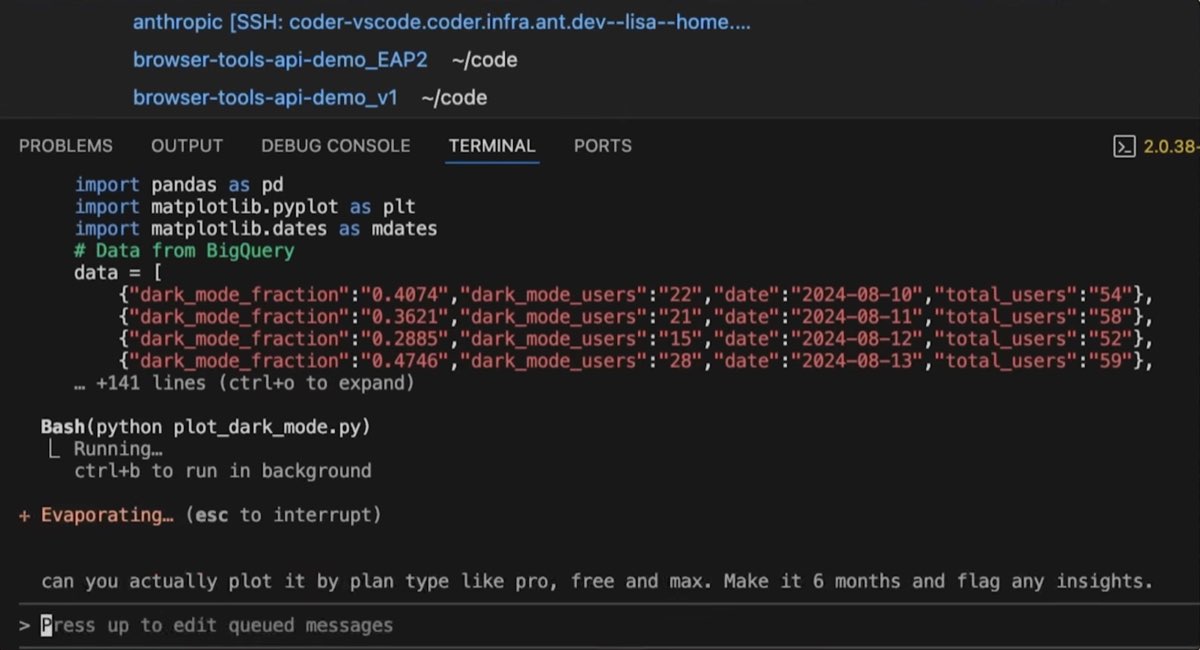

In the video, Lisa types a question into Claude Code asking it to explore the fraction of dark mode usage over the past three months.
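For readers curious what a question like this turns into behind the scenes, here is a minimal sketch of the kind of query involved. The video does not show the SQL Claude wrote or the real schema, so the `sessions` table, its columns, and every row below are hypothetical, and an in-memory SQLite database stands in for BigQuery so the example runs anywhere.

```python
import sqlite3

# Hypothetical schema and rows: the real tables live in BigQuery
# and are not shown in the video.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sessions (day TEXT, user_id INTEGER, theme TEXT)")
conn.executemany(
    "INSERT INTO sessions VALUES (?, ?, ?)",
    [
        ("2025-01-01", 1, "dark"),
        ("2025-01-01", 2, "light"),
        ("2025-01-01", 3, "dark"),
        ("2025-01-02", 1, "dark"),
        ("2025-01-02", 2, "light"),
        ("2025-01-02", 3, "light"),
        ("2025-01-02", 4, "light"),
    ],
)

# Fraction of sessions per day that used dark mode.
DAILY_DARK_FRACTION = """
SELECT day,
       AVG(CASE WHEN theme = 'dark' THEN 1.0 ELSE 0.0 END) AS dark_fraction
FROM sessions
GROUP BY day
ORDER BY day
"""

daily = conn.execute(DAILY_DARK_FRACTION).fetchall()
for day, fraction in daily:
    print(day, round(fraction, 2))
```

With these made-up rows, the first day comes out at 0.67 and the second at 0.25. The point is that the PM never has to write or even read this; the plain-English question is the interface.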

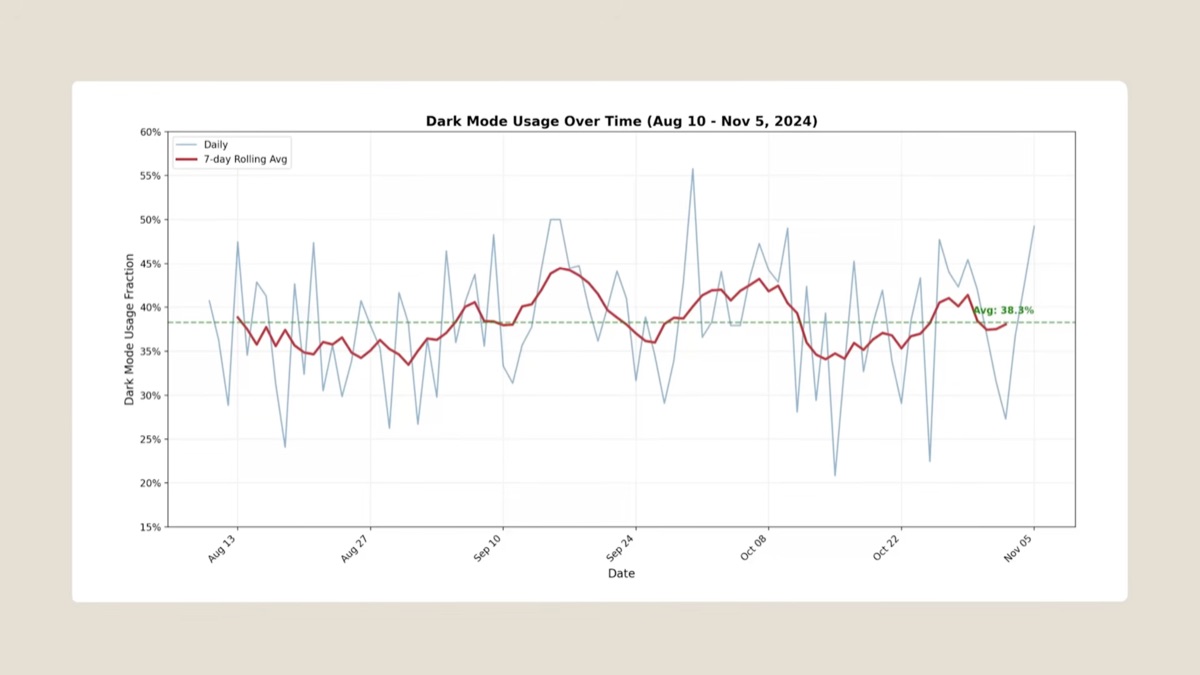

Claude doesn't just return raw numbers. It runs the query, writes the code to plot the data, and produces a finished chart, complete with a 7-day rolling average and an overall average line that Lisa didn't ask for.
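The two extra lines on the chart are simple to compute. The sketch below is not the code Claude wrote in the demo (that code is not shown); it is one plausible way to get a 7-day rolling average and an overall average, using made-up daily fractions and a hypothetical helper named `rolling_mean`.

```python
from statistics import mean


def rolling_mean(values, window=7):
    """Trailing rolling mean.

    The first window-1 points average whatever data is available so far,
    so the output has the same length as the input.
    """
    out = []
    for i in range(len(values)):
        start = max(0, i - window + 1)
        out.append(mean(values[start:i + 1]))
    return out


# Made-up daily dark mode fractions, for illustration only.
daily_fraction = [0.60, 0.62, 0.58, 0.61, 0.65, 0.63, 0.59, 0.66, 0.64, 0.62]

smoothed = rolling_mean(daily_fraction, window=7)  # the smoothed trend line
overall = mean(daily_fraction)                     # the flat reference line
```

The rolling average smooths out day-to-day noise such as weekday versus weekend usage, while the overall average gives a single baseline to compare against. A plotting library would then draw the raw series, `smoothed`, and a horizontal line at `overall`.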

Her reaction: "The graph is nicer than what I would have put together if I had done this myself."

Then she iterates, asking Claude to plot the same data by plan type. Claude asks permission before making changes, she confirms, and the new chart appears.

The whole sequence takes seconds. Lisa notes that doing this herself would have taken hours, if not more.

The practical shift here is significant: PMs no longer have to wait in a queue for data science help on routine questions. That team can focus on harder problems instead.

The second workflow: building test cases at scale

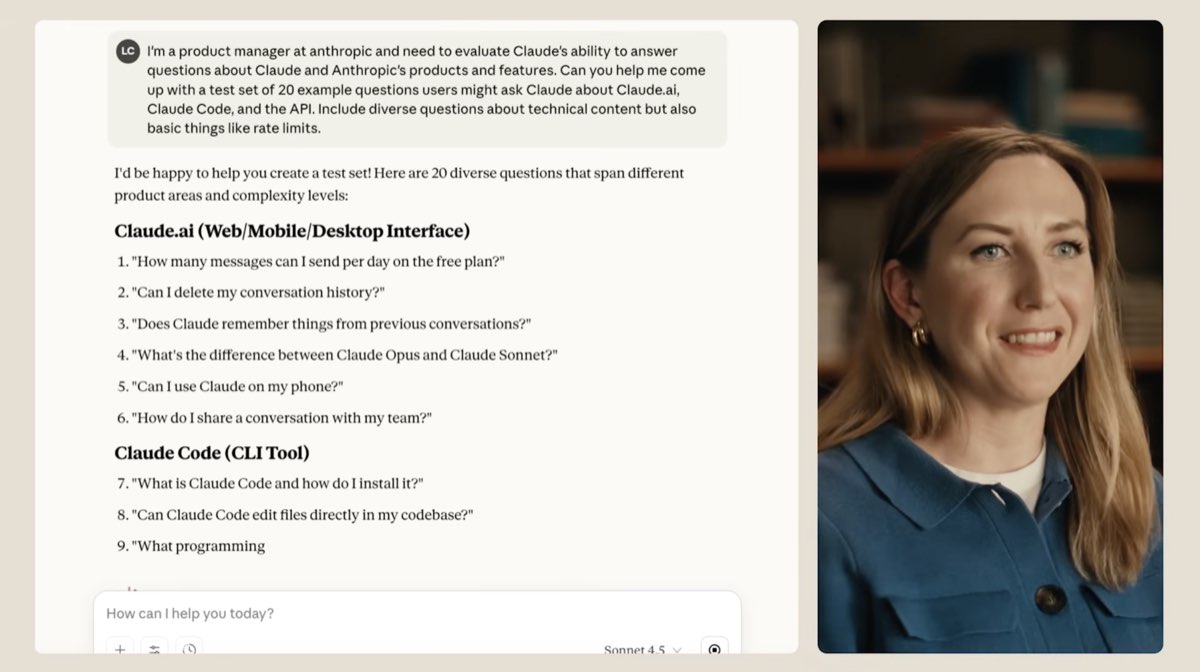

Product quality at Anthropic depends heavily on evals. Evals (short for evaluations) are structured tests that check whether an AI product is behaving the way it should. Think of them as quality control checks for AI systems.
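To make the idea concrete, here is a minimal sketch of what an eval can look like in code. It is an illustration under stated assumptions, not Anthropic's eval tooling: `EvalCase`, `run_evals`, and the stand-in `fake_assistant` are all hypothetical names, and a real eval would call the actual product instead of a stub.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    prompt: str
    check: Callable[[str], bool]  # returns True when the answer is acceptable


def fake_assistant(prompt: str) -> str:
    """Stand-in for the product under test (hypothetical)."""
    if "command line" in prompt:
        return "Claude Code runs in your terminal."
    return "I'm not sure."


def run_evals(cases, system):
    """Run every case against the system and return per-case results and the pass rate."""
    results = [(case.prompt, case.check(system(case.prompt))) for case in cases]
    pass_rate = sum(ok for _, ok in results) / len(results)
    return results, pass_rate


cases = [
    EvalCase("Which Claude product works from the command line?",
             lambda answer: "Claude Code" in answer),
    EvalCase("Which Claude product is a chat app in the browser?",
             lambda answer: "Claude.ai" in answer),
]

results, pass_rate = run_evals(cases, fake_assistant)
print(pass_rate)
```

Here the stub answers the first question acceptably and fails the second, so the pass rate is 0.5. Each case pairs an input with a check, which is what turns "I think this works" into a number that can be tracked over time.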

Starting from just a couple of example test cases, Lisa gives Claude a product scenario and asks it to expand the option space. The result: what started as two examples quickly becomes 50.
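In the video, Claude does this expansion itself from a natural-language request. As a rough mental model of what "expanding the option space" means, the sketch below crosses a few dimensions of a scenario to enumerate combinations worth testing. The dimensions and their values are hypothetical; the video does not list the ones Lisa used.

```python
from itertools import product

# Hypothetical dimensions of a product scenario.
product_areas = ["Claude.ai", "Claude Code"]
question_types = ["how-to", "pricing", "troubleshooting", "comparison", "limits"]
user_levels = ["new user", "regular user", "admin", "developer", "evaluator"]


def expand_option_space(*dimensions):
    """Return every combination of the given dimensions as a list of tuples."""
    return list(product(*dimensions))


scenarios = expand_option_space(product_areas, question_types, user_levels)
print(len(scenarios))  # 2 * 5 * 5 = 50
```

Each tuple, such as `("Claude.ai", "how-to", "new user")`, is a slot that still needs an actual test question written for it. That writing step is where a language model helps most, since it can also suggest dimensions the PM had not thought of.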

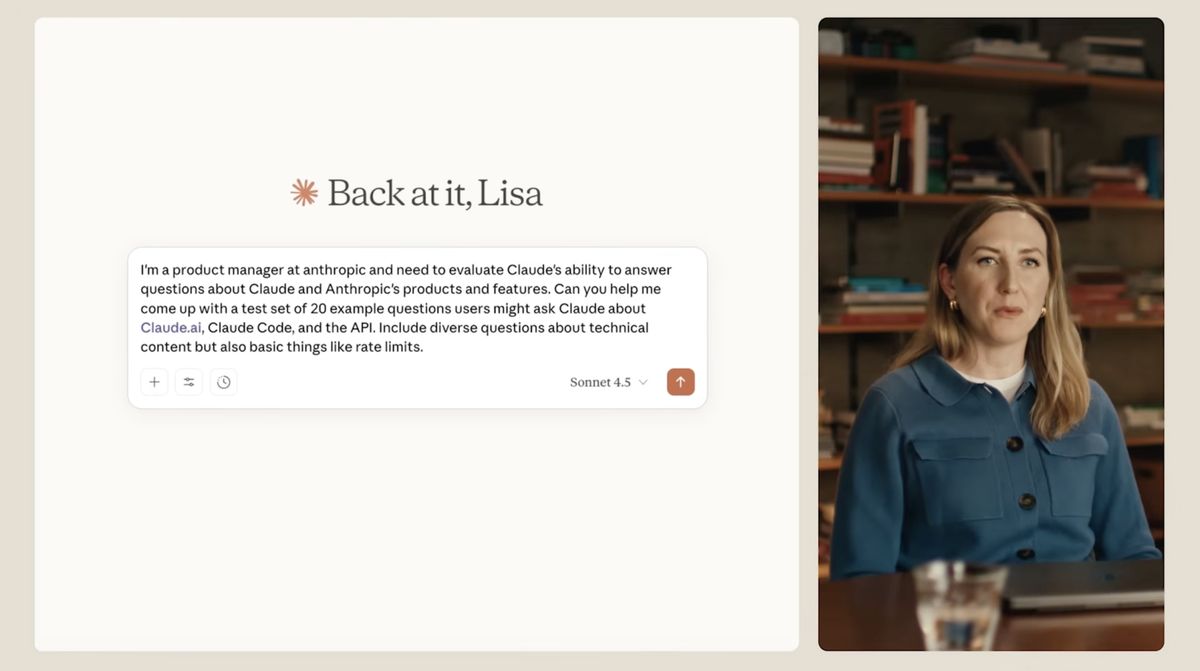

In the Claude.ai interface, Lisa asks Claude to generate 20 test questions about Claude products.

Claude responds with a categorized set of questions covering Claude.ai, Claude Code, and several other product areas.

Before this, building a solid eval set was an engineering task that PMs depended on others to complete. Now it's something Lisa can do in a break between meetings. When PMs own their own test suites, product quality stops being reactive and starts being proactive.

The bigger picture: a 41x jump in 16 months

Cat Wu's companion blog post puts these individual workflows in a wider context. According to data from METR (an AI safety research organization that measures what AI models can actually do), AI capability has improved dramatically in a short time.

In October 2024, Claude 3.5 Sonnet (new) could reliably complete tasks that would take a human about 21 minutes. By February 2026, just 16 months later, Claude Opus 4.6 could handle tasks that would take a human roughly 12 hours. That's a 41x improvement. The system prompt for Claude Code shrank by 20% between those two models, because the newer model needs less hand-holding.
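The multiplier is just the ratio of the two task lengths, and it is worth checking against the rounded figures quoted here. Dividing exactly 12 hours by 21 minutes gives about 34x, while a 41x jump from 21 minutes implies a task length a little over 14 hours. So the 41x figure presumably rests on less heavily rounded values than "roughly 12 hours"; that is an inference from the arithmetic, not something the summary states.

```python
start_minutes = 21       # task length quoted for October 2024
end_minutes = 12 * 60    # "roughly 12 hours", quoted for February 2026
quoted_multiplier = 41   # the improvement figure quoted in the text

# Ratio implied by the rounded task lengths.
ratio_from_rounded = end_minutes / start_minutes

# End task length (in hours) that a 41x jump from 21 minutes would imply.
implied_end_hours = quoted_multiplier * start_minutes / 60

print(round(ratio_from_rounded, 2))
print(round(implied_end_hours, 2))
```

Either way the order of magnitude is the same: tasks that took minutes have become tasks that take most of a working day or more.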

Wu describes a three-tool daily workflow: Claude.ai for conversations and drafts, Claude Code for data analysis and building small tools, and Cowork (Anthropic's internal AI tool for office tasks like email, presentations, and to-do lists). The shift it enables is consistent across teams: less time on coordination and operations, more time on strategy and customer conversations.

Wu also quotes two external PMs who describe similar changes at their own companies. Bihan Jiang, Director of Product at Decagon (an AI customer service company), says the team there has gone from writing PRDs (product requirements documents, the written spec a PM creates before building something) to building prototypes directly. Kai Xin Tai, Senior Product Manager at Datadog (a software monitoring platform), says PMs are now expected to bring data to every conversation instead of waiting for analysts to prepare it.

More than automation

Lisa's closing framing is worth quoting directly: "It's more than automation for me. It's things that I wouldn't have otherwise necessarily even been capable of doing independently. So it's actually extending what I can accomplish on my own."

That distinction matters. Automation speeds up what you were already doing. What Lisa describes is different: gaining capabilities she didn't have before. Writing SQL is a skill. Building data visualizations is a skill. Neither is a core PM skill, and neither should have to be if the tools are good enough.

The dark mode query, the follow-up chart, the eval set: none of these required engineering support. They happened when a PM had a question and a tool that could answer it.

Glossary

| Term | Definition |
|---|---|
| BigQuery | Google's cloud database service for large datasets. Lets you store and query huge amounts of data without managing your own servers. |
| MCP (Model Context Protocol) | A standard that lets AI tools connect to external data sources. Like a universal adapter that plugs an AI into a database, file system, or service. |
| Evals (evaluations) | Structured tests that check whether an AI product behaves correctly. Used to measure quality and catch regressions before they reach users. |
| SQL | A query language used to retrieve data from databases. Most data science workflows rely on it, but it takes time to learn and depends on knowing the database structure. |
| Cowork | Anthropic's internal AI tool for everyday office tasks: email, presentations, to-do lists. Part of the three-tool workflow Cat Wu describes in her blog post. |

Sources and resources

Want to go deeper? Watch the full video on YouTube →